The last post here mused on the connection between, but also the distinctness of, the scientific goals of "understanding" and "prediction". An additional goal of science is "description", the attempt to define and classify phenomena. Much as understanding and prediction are distinct but interconnected, it can be difficult to separate research activities into description and understanding. Descriptive research is frequently considered preliminary or incomplete on its own, meant to be an initial step prior to further analysis. (On the other hand, the decline of more descriptive approaches such as natural history is often bemoaned.) With that in mind, it was interesting to see several recent papers in high-impact journals that rely primarily on descriptive methods (especially ordinations) to provide generalizations. It's fairly uncommon to see ordination plots as the key figure in journals like Nature or The American Naturalist, and it opens up the question: when do descriptive methods exceed description and provide new insights and understanding?

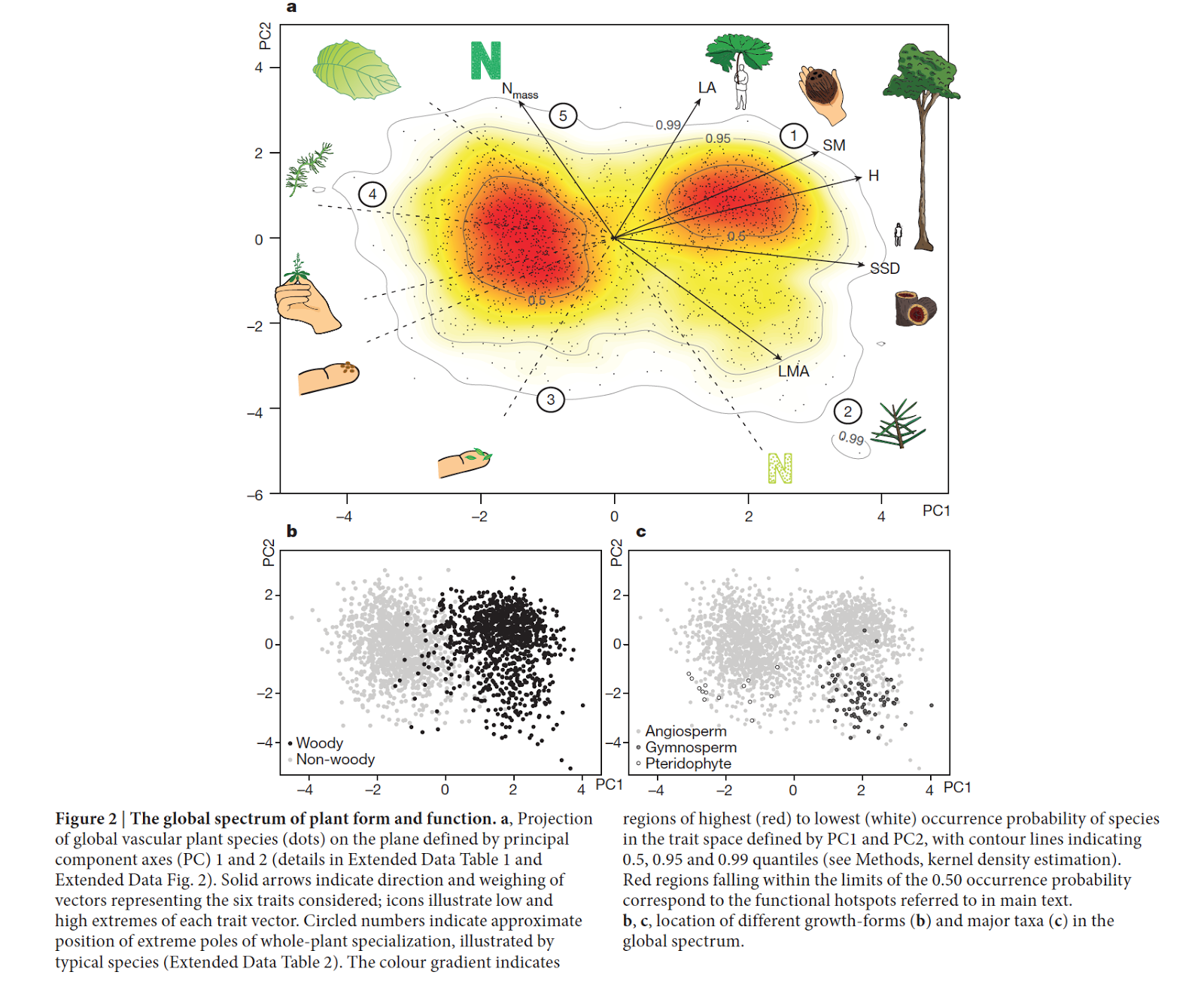

For example, Diaz et al.'s 2016 Nature paper took advantage of a massive database of trait data (from ~46,000 species) to explore the inter-relationships between 6 ecologically relevant plant traits. The resulting PCA plot (figure below) illustrates that, across many species, well-known tradeoffs between a) organ size and scaling and b) the tissue economic spectrum appear fairly universal. Variation in plant form and function may be huge, but the Diaz et al. ordination highlights that it is still relatively constrained, and that many strategies (trait combinations) are apparently untenable.

From Diaz et al. 2016.
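To make the idea of a "constrained" trait space a little more concrete, here is a minimal sketch of a PCA on six traits. It is not Diaz et al.'s analysis or data: the traits are simulated, and the assumption that three of them follow a "size" axis and three follow an "economics" axis is built in by hand (the function names `simulate_traits` and `pca` are likewise just illustrative). The point is only to show what it looks like when two axes soak up most of the variation in six variables.

```python
import numpy as np


def simulate_traits(n_species=500, seed=0):
    """Simulate six hypothetical traits driven by two latent axes (an assumption)."""
    rng = np.random.default_rng(seed)
    size = rng.normal(size=n_species)        # latent "organ size" axis
    economics = rng.normal(size=n_species)   # latent "tissue economics" axis
    latent = np.column_stack([size, economics])
    # Columns 0-2 follow the size axis; columns 3-5 follow the economics axis,
    # with one trait loading negatively to mimic a tradeoff.
    weights = np.array([[1.0, 1.0, 1.0, 0.0, 0.0, 0.0],
                        [0.0, 0.0, 0.0, 1.0, -1.0, 1.0]])
    noise = rng.normal(scale=0.3, size=(n_species, 6))
    return latent @ weights + noise


def pca(traits, n_axes=2):
    """PCA via SVD on standardized columns.

    Returns species scores, trait loadings, and the proportion of variance
    explained by every axis.
    """
    z = (traits - traits.mean(axis=0)) / traits.std(axis=0, ddof=1)
    u, s, vt = np.linalg.svd(z, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)
    scores = u[:, :n_axes] * s[:n_axes]
    loadings = vt[:n_axes].T
    return scores, loadings, explained


traits = simulate_traits()
scores, loadings, explained = pca(traits)
print("Proportion of variance on first two axes:", explained[:2].sum())
```

If real trait combinations were unconstrained, the variance would be spread more evenly over all six axes; a large share on the first two is the numerical counterpart of the "many strategies are untenable" reading of the ordination.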

From Winemiller et al. 2017

Finally, a new TREE paper from Daru et al. (In press) attempts to identify some of the processes underlying the formation of regional assemblages (what they call phylogenetic regionalization, i.e. distinct, phylogenetically delimited biogeographic units). They similarly rely on ordinations, taking measurements of phylogenetic turnover between sites and then identifying clusters of phylogenetically similar sites. Daru et al.'s paper is slightly different in that, rather than presenting insights from descriptive methods, it provides a descriptive method that they feel will lead to such insights.
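As a rough illustration of the "ordinate turnover, then cluster" workflow, the sketch below runs a principal coordinates analysis (classical scaling) on a toy dissimilarity matrix and then groups the sites with a simple k-means. This is a stand-in and not the procedure from Daru et al.: the turnover values are invented (low within two made-up regions, high between them), and the choice of PCoA plus k-means, along with the names `toy_turnover`, `pcoa` and `kmeans`, is an assumption made for the example.

```python
import numpy as np


def toy_turnover(n_per_region=5, seed=1):
    """Invented site-by-site turnover matrix: two regions, symmetric, zero diagonal."""
    rng = np.random.default_rng(seed)
    n = 2 * n_per_region
    region = np.repeat([0, 1], n_per_region)
    base = np.where(region[:, None] == region[None, :], 0.2, 0.8)
    noise = rng.uniform(-0.05, 0.05, size=(n, n))
    d = base + (noise + noise.T) / 2  # keep the matrix symmetric
    np.fill_diagonal(d, 0.0)
    return d


def pcoa(dissim, n_axes=2):
    """Principal coordinates analysis of a dissimilarity matrix."""
    d2 = np.asarray(dissim, dtype=float) ** 2
    n = d2.shape[0]
    centering = np.eye(n) - np.ones((n, n)) / n
    gram = -0.5 * centering @ d2 @ centering      # double-centred matrix
    vals, vecs = np.linalg.eigh(gram)             # eigenvalues in ascending order
    order = np.argsort(vals)[::-1][:n_axes]       # keep the largest ones
    vals = np.clip(vals[order], 0.0, None)        # guard against negative eigenvalues
    return vecs[:, order] * np.sqrt(vals)


def kmeans(points, k=2, n_iter=50):
    """Plain k-means with a deterministic farthest-point initialisation."""
    centres = [points[0]]
    for _ in range(k - 1):
        dist = np.min([np.linalg.norm(points - c, axis=1) for c in centres], axis=0)
        centres.append(points[np.argmax(dist)])
    centres = np.array(centres)
    for _ in range(n_iter):
        dists = np.linalg.norm(points[:, None] - centres[None], axis=2)
        labels = np.argmin(dists, axis=1)
        # Recompute each centre; keep the old one if a cluster ends up empty.
        new = np.array([points[labels == j].mean(axis=0) if np.any(labels == j)
                        else centres[j] for j in range(k)])
        if np.allclose(new, centres):
            break
        centres = new
    return labels


turnover = toy_turnover()
coords = pcoa(turnover)
labels = kmeans(coords, k=2)
print(labels)
```

With real data the number of clusters is itself a judgment call, which is part of why a method like this is descriptive: it proposes regions, and the explanation for them has to come from somewhere else.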

Part of this blip of descriptive results and methods may be related to a general return to the concept of the multidimensional or hypervolume niche (e.g. 1, 2). Models are much more difficult in this context, and so description is a reasonable starting point. In addition, the most useful descriptive approaches are like those seen here, where new data, a lot of data, or new techniques that can transform existing data are available. In these cases, they provide a route to identifying generalizations. (This also leads to an interesting question: are these kinds of analyses simply brute force solutions to generalization? Or do descriptive results sometimes exceed the sum of their individual data points?)

References:

Díaz S, Kattge J, Cornelissen JH, Wright IJ, Lavorel S, Dray S, Reu B, Kleyer M, Wirth C, Prentice IC, Garnier E. (2016). The global spectrum of plant form and function. Nature. 529(7585):167.

Eric R. Pianka, Laurie J. Vitt, Nicolás Pelegrin, Daniel B. Fitzgerald, and Kirk O. Winemiller. (2017). Toward a Periodic Table of Niches, or Exploring the Lizard Niche Hypervolume. The American Naturalist. https://doi.org/10.1086/693781

Barnabas H. Daru, Tammy L. Elliott, Daniel S. Park, T. Jonathan Davies. (In press). Understanding the Processes Underpinning Patterns of Phylogenetic Regionalization. TREE. DOI: http://dx.doi.org/10.1016/j.tree.2017.08.013